How the Drivers of Conservation Attitudes Differ Across 12 Countries

The National Geographic Society commissioned a $500K international survey of 12,000 adults across 12 countries to understand how people think, feel, and act on conservation. I led the analysis and reporting, using predictive models to move past surface-level opinion polling and uncover the psychological mechanisms that actually drive pro-environment attitudes and behaviors in each market. I then presented the findings and strategic recommendations to the NatGeo president and senior directors.

The Challenge

National Geographic wanted to understand global public opinion on nature and the environment. That's a decent starting point, but polling alone doesn't always give you what's most useful. Knowing that, say, 78% of people in the UK are concerned about air pollution is fine, but it's more useful to know whether that concern is actually connected to the outcomes NatGeo cares about (e.g., whether people value nature, trust conservation organizations, or change their behavior). And a poll number definitely doesn't tell you whether that connection works the same way in the UK as it does in Kenya or Indonesia.

The survey had been fielded by Ipsos across 12 countries (USA, Mexico, Brazil, UK, South Africa, Kenya, China, South Korea, India, Australia, UAE, and Indonesia), with ~1,000 respondents per country. NatGeo needed someone to make this giant pile of survey data strategically useful. That meant going well beyond crosstabs and bar charts.

The core question wasn't "what do people think about nature?" It was "what predicts whether they'll value it, and does the answer change depending on where they live?" The gap between those two questions is where the real strategic value lives.

Analytic Approach

I structured the analysis at two levels. The first was descriptive: examining how attitudes, trust, and behaviors varied across demographic groups within and between countries. There were some interesting insights there, but the second level, the predictive one, is where more of the fun stuff is. For each of the 12 countries, I built a model that basically asked: if I know someone's demographics, moral values, environmental concern, perceived knowledge, sense of personal efficacy, and several other variables, how well can I predict whether they'll score high on valuing nature? And which of those variables is doing the most work?

Why Relative Weight Analysis

The technique I used was Relative Weight Analysis, which is better suited to this kind of question than reading coefficients off a standard multiple regression. Regression coefficients can be misleading as measures of importance when predictors are correlated with each other (which they almost always are in survey data). Relative Weight Analysis partitions the model's total explanatory power (its R²) and assigns each predictor a share that reflects both its unique contribution and the contribution it shares with correlated predictors. This gives you a clean, interpretable ranking of what matters most, expressed as the percentage of variance in the outcome that each predictor accounts for.
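For readers who want to see the mechanics, here is a minimal sketch of how relative weights can be computed, assuming Johnson's (2000) formulation of the method. The function name, variable names, and the synthetic data are illustrative assumptions, not the code or data from the NatGeo project.

```python
import numpy as np

def relative_weights(X, y):
    """Raw relative weights (Johnson, 2000) and the model R-squared.

    X: (n, p) array of predictors; y: (n,) outcome.
    Illustrative helper, not the project's actual code.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    p = X.shape[1]

    # Correlations among predictors and between predictors and outcome.
    corr = np.corrcoef(np.column_stack([X, y]), rowvar=False)
    r_xx = corr[:p, :p]
    r_xy = corr[:p, p]

    # Symmetric square root of the predictor correlation matrix. Its entries
    # link each predictor to a set of uncorrelated stand-in variables.
    evals, evecs = np.linalg.eigh(r_xx)
    evals = np.clip(evals, 0.0, None)  # guard against tiny negative values
    lam = evecs @ np.diag(np.sqrt(evals)) @ evecs.T

    # Standardized coefficients of the outcome on the uncorrelated variables.
    beta = np.linalg.solve(lam, r_xy)

    # Each predictor's weight: its squared links to the uncorrelated
    # variables, weighted by how much outcome variance each one explains.
    raw = (lam ** 2) @ (beta ** 2)
    return raw, raw.sum()

# Synthetic example (NOT survey data): two correlated predictors plus a third.
rng = np.random.default_rng(0)
n = 1000
concern = rng.normal(size=n)
knowledge = 0.6 * concern + 0.8 * rng.normal(size=n)
efficacy = rng.normal(size=n)
value_nature = 0.5 * concern + 0.3 * knowledge + 0.1 * efficacy + rng.normal(size=n)

X = np.column_stack([concern, knowledge, efficacy])
raw, r2 = relative_weights(X, value_nature)
pct_of_r2 = 100 * raw / r2  # each predictor's share of the explained variance

for name, w, share in zip(["concern", "knowledge", "efficacy"], raw, pct_of_r2):
    print(f"{name:10s} {100 * w:5.1f}% of outcome variance, {share:5.1f}% of R^2")
```

The raw weights sum to the model's R², so multiplying them by 100 gives the "percentage of variance in the outcome" scale used for the figures reported here. Dividing by R² instead gives each predictor's share of the explained variance.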

Running this separately for each country is what made the analysis powerful. Two countries might show identical average scores on valuing nature, but the models reveal that the underlying reasons are completely different. That distinction is what turns research into strategy.

12 Countries

USA, Mexico, Brazil, UK, South Africa, Kenya, China, South Korea, India, Australia, UAE, Indonesia. Fielded online by Ipsos, ~1,000 adults per country.

Two Outcome Variables

Valuing Nature (attitudinal index) and Pro-Environmental Behavior (behavioral index). Separate predictive models built for each outcome in each country.

17 Predictor Variables

Demographics (age, gender, education, income), moral values (harm, fairness, purity), environmental concern, perceived knowledge, efficacy, social norms, political engagement, and more.

Contextual Triangulation

Findings from these survey models were cross-referenced with existing experimental research and domain expertise in environmental communication to strengthen causal confidence.

Key Findings

1. Concern and Knowledge Predict Valuing Nature Everywhere

Across all 12 countries, environmental concern and perceived environmental knowledge consistently ranked as top predictors of valuing nature. In the US, concern alone explained 17.2% of the variance in valuing nature, and knowledge explained another 12.6%. In Brazil, those numbers were 14.2% and 8.8%. In the UK, 20.9% and 10.8%. This pattern held globally, and it's worth pausing on because it has real strategic implications.

The intuition here is straightforward. People who feel knowledgeable about environmental threats and who feel viscerally concerned about them tend to value nature more. But the practical upshot is that these are potentially moveable levers. You can design campaigns that increase environmental knowledge and heighten concern, and existing experimental research supports the idea that doing so actually shifts attitudes. The predictive models confirm that those levers are connected to the right outcome.

Communication campaigns should combine informational content (building perceived knowledge) with emotional engagement (building concern). Existing experimental evidence suggests that either lever alone is less effective than the combination. This dual pathway held across all 12 markets.

2. But the Role of Moral Values Varies Dramatically

Here's where things get interesting. Although concern and knowledge were consistently strong everywhere, the role of moral values (harm, fairness, purity) in predicting conservation attitudes diverged wildly across countries. In China, moral values collectively explained 32% of the variance in valuing nature. In the UAE, 31%. In South Korea, 26%. But in South Africa, moral values explained only 10%. In the US and UK, around 11%.

This is a threefold difference in predictive power for the same set of psychological constructs. What it means practically is that framing conservation as a moral issue (about preventing harm, ensuring fairness, or preserving purity) will land very differently depending on the market. A campaign built around moral messaging might be extremely effective in China or India and significantly less so in Australia or South Africa.
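The "threefold" figure is simple arithmetic on the numbers quoted above. A small sketch, using only the percentages stated in this write-up (the US and UK values are the approximate "around 11%" figure, and the variable names are my own):

```python
# Share of variance in valuing nature explained by moral values, in percent.
# US and UK are approximate ("around 11%"); other values are as quoted above.
moral_share = {
    "China": 32,
    "UAE": 31,
    "South Korea": 26,
    "US": 11,
    "UK": 11,
    "South Africa": 10,
}

highest = max(moral_share, key=moral_share.get)
lowest = min(moral_share, key=moral_share.get)
ratio = moral_share[highest] / moral_share[lowest]

print(f"{highest} vs {lowest}: {ratio:.1f}x")
```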

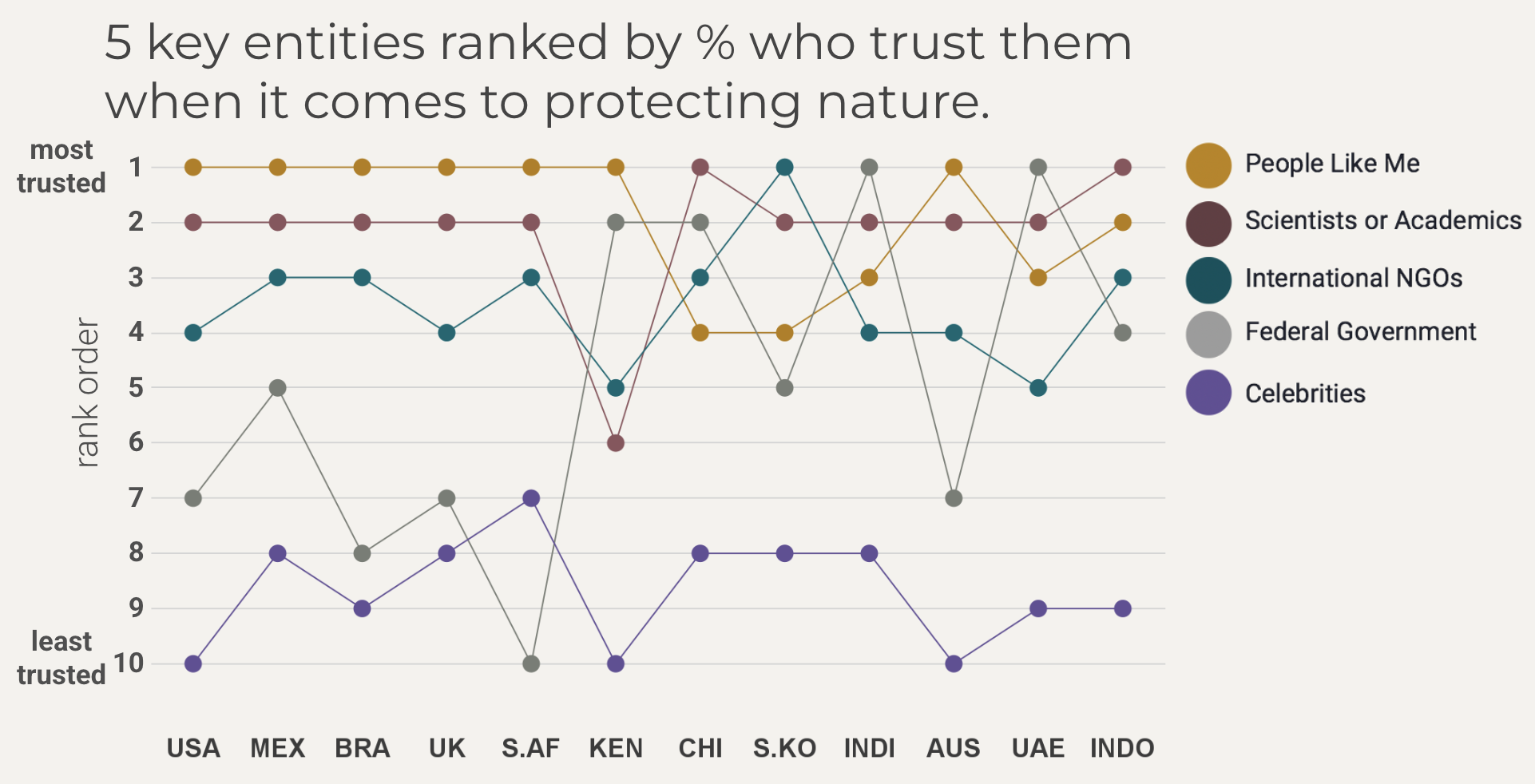

3. Who People Trust Tells You Who Should Deliver the Message

In 11 of 12 countries, scientists and academics were trusted by a majority to do what is right regarding nature. Celebrities and wealthy individuals were reliably at the bottom of the trust rankings. This is useful baseline knowledge for any organization planning a communications campaign.

But the more strategic finding was how much trust in the national government varied. In India, 76% trusted their government on protecting nature. In South Africa, only 17% did. In Australia, it was 26%. This massive variation means that government partnerships or government-endorsed messaging will be perceived entirely differently depending on the country.

High Government Trust

India (76%), China (74%), UAE (71%), Kenya (69%), Indonesia (63%). In these markets, government-aligned messaging carries credibility. But interestingly, perceived government responsibility was often lower than trust, meaning people trusted the government without necessarily expecting it to lead.

Low Government Trust

South Africa (17%), UK (20%), US (21%), Australia (26%), South Korea (27%). In these markets, government-fronted campaigns risk backlash. NGOs, scientists, and peer-driven messaging ("people like me") are better positioned as trusted voices.

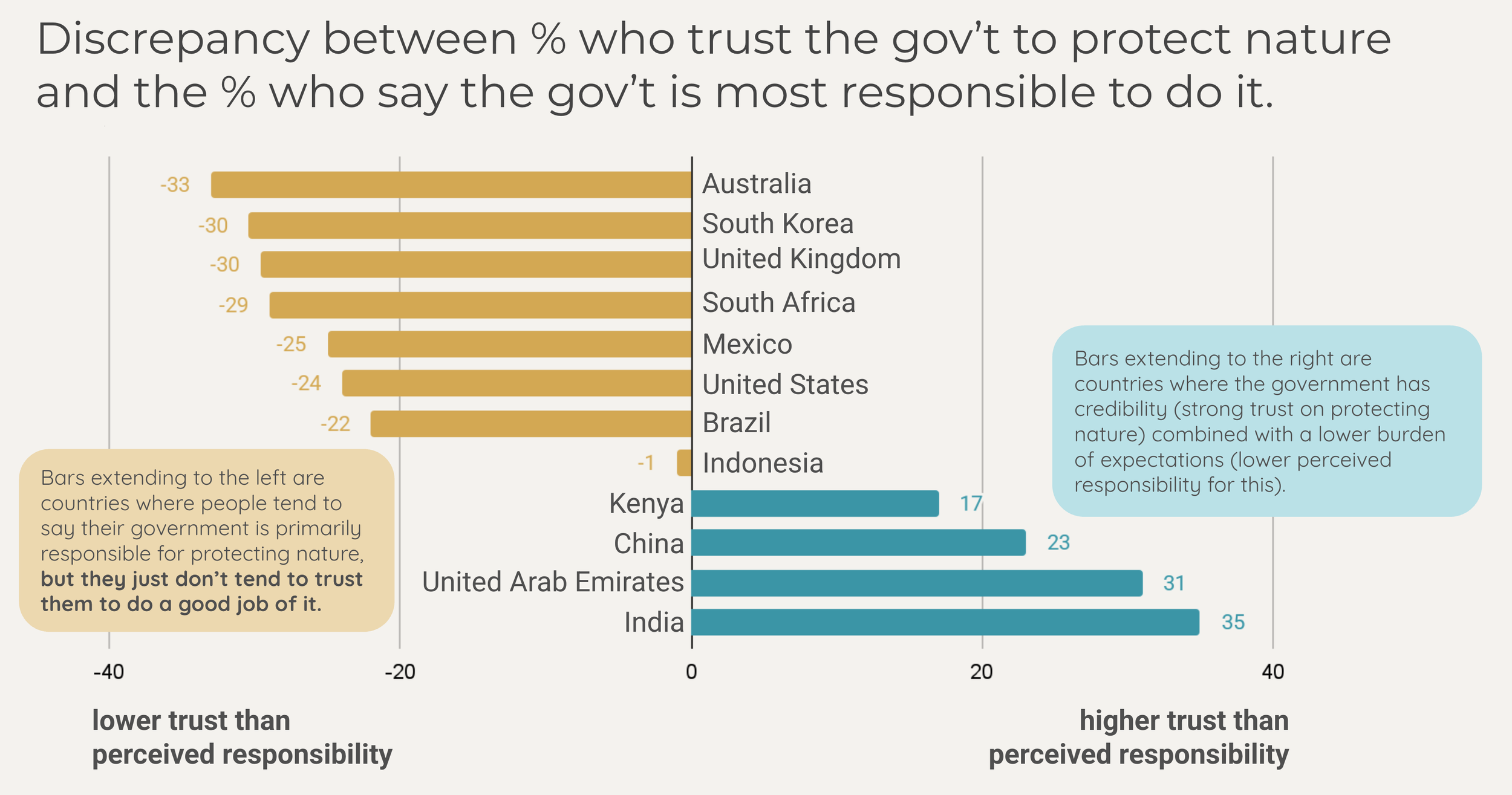

4. The Trust-Responsibility Gap Reveals Strategic Positioning

One of the most actionable findings came from comparing two questions side by side. One question asked who people trust to protect nature. The other asked who they think is most responsible for protecting nature. The gap between these two reveals where there's frustration (high responsibility, low trust) and where there's untapped goodwill (high trust, low perceived responsibility).

In Australia, people assigned their government high responsibility for protecting nature but gave it among the lowest trust scores in the study, a gap of 33 percentage points. That's a population that expects government action but doesn't believe it will be done well. In India, the pattern reversed: high trust in the government but lower attribution of responsibility, a 35-point gap in the opposite direction.

For National Geographic, this analysis was directly relevant. International NGOs occupied a specific position in this landscape, and the position was different in every country. In Mexico, 64% trusted international NGOs, but only 22% saw them as responsible. That's a high-trust, low-responsibility profile, meaning NatGeo had credibility without the burden of unmet expectations. But in the US, only 40% trusted international NGOs, and just 16% assigned them responsibility. That's a weaker starting position requiring more foundational trust-building.
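The gap itself is just a difference between two percentages. A minimal sketch using the international-NGO figures quoted above, where defining the gap as trust minus responsibility and the variable names are my own assumptions for illustration:

```python
# Percent who trust international NGOs to protect nature, and percent who
# see them as most responsible for it (figures quoted in the text above).
ngo = {
    "Mexico": {"trust": 64, "responsible": 22},
    "US": {"trust": 40, "responsible": 16},
}

# Assumed convention: positive gap = more trust than perceived responsibility
# (untapped goodwill); negative gap = more responsibility than trust.
gaps = {
    country: scores["trust"] - scores["responsible"]
    for country, scores in ngo.items()
}

for country, gap in gaps.items():
    print(f"{country}: {gap:+d} points")
```

Under this convention both markets show a positive gap, but Mexico's is larger and sits on a much higher trust base, which is what separates the two starting positions.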

NatGeo's strategic positioning needed to be calibrated to each market. In countries where NGO trust was high but perceived responsibility was low (e.g., Mexico, Brazil), NatGeo could leverage existing credibility immediately. In countries where NGO trust was lower (e.g., US, South Korea), the priority should be building familiarity and rapport, particularly among higher-education, higher-income segments where NGO trust tended to be stronger.

5. Demographics Don't Tell You Much, But Behavior Predictors Do

One of the clearest findings across all 12 countries was that basic demographics (age, gender, education, income) were consistently weak predictors of both valuing nature and pro-environmental behavior. In the US model, for instance, all four demographic variables combined explained less than 1% of variance in valuing nature. The other roughly 48 percentage points of explained variance came from psychological and attitudinal variables.

This has a practical implication that many organizations get wrong. Demographic targeting (e.g., "young educated women are our audience for this campaign") is unlikely to meaningfully segment people on conservation attitudes. Psychographic targeting based on concern, knowledge, moral values, and efficacy beliefs will be far more effective. The predictive models in each country identify exactly which psychographic variables to prioritize.

In Brazil, personal efficacy was the strongest predictor of pro-environmental behavior (6.2% of variance). In the UK, environmental concern dominated (11.2%). In the US, concern and valuing nature were the two strongest (9.2% and 8.2%). The mechanism connecting attitudes to action isn't universal. Each country has its own bottleneck, and the models identify where it is.

Translating Findings Into Strategy

The final report delivered country-specific strategic recommendations for all 12 markets. But three themes cut across the full set and shaped the overall strategic direction I presented to NatGeo.

Lead with trusted messengers, and those messengers vary by market. Scientists were broadly trusted, but the operational question was whether to partner with government entities, NGOs, or peer-driven campaigns. The trust data provided a clear answer for each country. In low-government-trust markets like Brazil and Australia, NatGeo's own brand and scientific credibility should be front and center. In high-government-trust markets like India and the UAE, government partnerships could amplify reach.

Match the moral frame to the market. In countries where moral values strongly predicted conservation attitudes (China, UAE, South Korea, India), campaigns should explicitly frame environmental issues in terms of harm prevention, fairness, or purity. In countries where moral values were weaker predictors (US, UK, South Africa, Australia), informational and concern-based messaging is a better fit.

Close the attitude-behavior gap by building efficacy, not just awareness. In every country, pro-environmental attitudes far outpaced pro-environmental behavior. The predictive models consistently identified personal efficacy as a key bridge between caring about nature and actually acting on it. Campaigns that only raise concern without also building a sense of "I can make a difference" are leaving impact on the table.

Methodological Reflection

A few things worth flagging about the design and its limitations. The data were collected online by Ipsos, which means representativeness varies by country. In countries with high internet penetration (US, UK, Australia, South Korea), the samples are reasonably representative. In countries where internet access is concentrated among urban, educated, and higher-income populations (e.g., Kenya, Indonesia, India), the findings are less generalizable to the full national population. I was transparent about this in the report, and it factored into how confidently I made recommendations for each country.

The predictive models explain meaningful but incomplete portions of variance (R² values typically between .28 and .51 across countries and outcomes). This is normal and expected for survey-based models with psychological predictors. The unexplained variance represents unmeasured variables, context-specific factors, measurement error, and genuine randomness. The models aren't claiming to fully explain conservation attitudes. They're identifying which measured variables are the most powerful levers, and that's exactly what a strategic client needs to know.

The most important caveat is about causality. Survey data on its own can't establish that concern causes people to value nature, only that the two are strongly linked. I addressed this by triangulating with existing experimental literature. Where controlled experiments have already demonstrated causal effects (e.g., efficacy messaging increasing pro-environmental behavior, moral framing shifting attitudes), I was more confident in translating the predictive models into causal strategic recommendations. Where that experimental evidence was thinner, I flagged the uncertainty and recommended follow-up experiments.

If I were extending this work, the natural next step would be randomized controlled experiments testing the specific messaging strategies that the models suggest. The survey identifies the targets. Experiments would confirm whether hitting those targets actually moves the needle. I included this as a "Future Directions" recommendation in the report.