Should AI Tell You When It's Guessing?

I ran a randomized experiment testing whether adding confidence indicators to AI responses changes how people interact with the information. The short answer: it made them more uneasy, but not more careful. People who saw the indicator felt less satisfied and less willing to reuse the AI, but they didn't actually take the extra step to verify what it told them.

The Strategic Question

About six months ago, I made some changes to the background settings of Claude that have dramatically improved its usefulness. Here's the prompt I added: "Please provide at the end of all answers to factual questions a brief assessment of certainty based on the level of objective, obvious, concrete, factual, verified evidence that supports your answer. For example, you could simply say '7/10 confidence, with the strongest points being __ and weakest being ___.' This will help me assess what has extremely strong supporting evidence and what is simply a best guess."

This has helped a lot. Although it's not perfect, it gives me a quick signal about the likelihood of hallucination or overconfidence. And every major AI company (and every user) is grappling with the same design problem right now. The models generate responses that sound confident regardless of whether they're drawing from well-documented facts or stringing together tenuous inferences. Users often can't tell the difference, because everything is delivered in the same assured tone. And as these tools get embedded deeper into everyday work, the cost of that gap keeps growing.

One proposed solution is simple. Just show users a confidence rating alongside every AI response about a factual point. Maybe a score from 1 to 10, with a short breakdown of which parts of the answer the model is most and least sure about. It's the kind of feature that sounds obviously good in a brainstorm, right? More transparency, better calibration, fewer costly errors. Seems like a slam dunk.

But our assumptions about these things are often wrong. And for a feature that would fundamentally change how hundreds of millions of people interact with AI, it's important to get it right. The question isn't whether users say they'd want confidence ratings. It's whether those ratings actually change behavior in ways that make the user experience better.

The core question: If you tell users that an AI response has low confidence, will they actually do something about it (e.g., verify with a secondary source)? And will they end up with more accurate knowledge about the topic?

I'm fascinated by uncertainty and how people deal with it. This was actually the focus of my Ph.D. work! I wrote my dissertation on how people interpret and respond to uncertainty markers when they gather information about important topics, and I've published a series of peer-reviewed scientific studies on the topic. Those studies found that the effects of disclosing uncertainty are far more nuanced than most people assume. Some types of uncertainty disclosures have no effect on audiences at all. Others can actually increase perceived credibility. And only specific combinations of uncertainty type and topic context reliably produce the negative reactions that organizations tend to fear (for a short summary, see "How to Communicate Uncertainty Without Losing Credibility").

So I wasn't starting from zero on this question. I had a strong prior that confidence indicators would have measurable attitudinal effects, that they would not uniformly backfire, but that translating attitudinal shifts into actual behavioral change is much harder than it looks. This study was designed to test exactly that.

Study Design

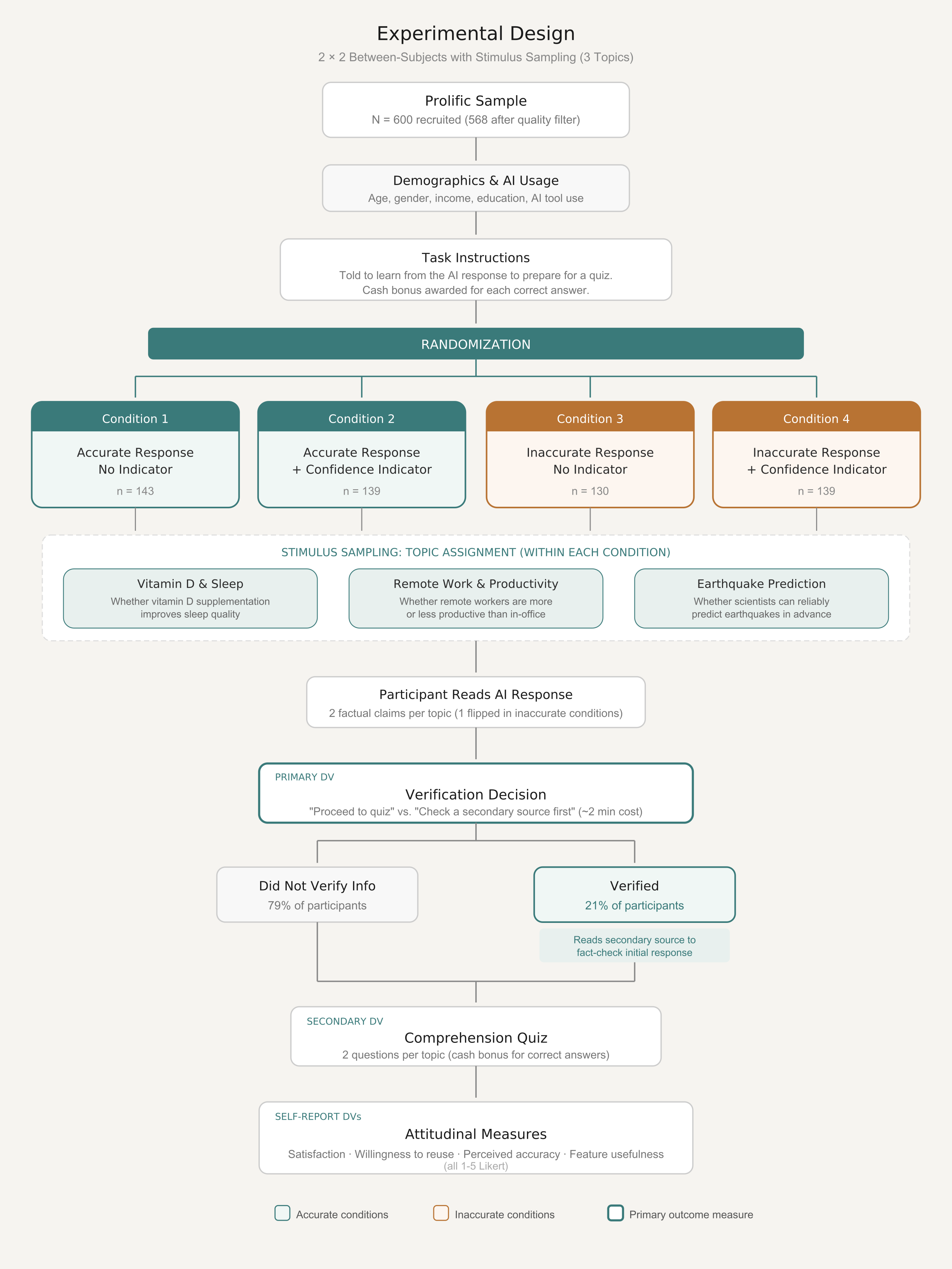

I designed and ran a 2×2 between-subjects experiment with 600 participants recruited on Prolific (568 after data cleaning). Participants were paid for their time and received a cash bonus for each correct answer on a comprehension quiz, which gave them a real financial incentive to engage carefully with the material.

Each participant was randomly assigned to one of four conditions defined by crossing two factors:

Factor 1: Confidence Indicator

Half of participants saw an AI response with a confidence score and sub-component breakdown. The other half saw the same response with no indicator.

Factor 2: Response Accuracy

Half saw a fully accurate AI response. The other half saw a response where one of two key claims was subtly but verifiably wrong.

Each participant was also randomly assigned to one of three topic scenarios (vitamin D and sleep, remote work and productivity, or earthquake prediction), which served as a stimulus replication factor. This meant the findings wouldn't be tied to any single topic's idiosyncrasies.
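As a rough illustration of the design, the 2×2 factors crossed with three topics give 12 cells. The sketch below is Python written for this write-up, not the Qualtrics randomizer used in the study. It assumes shuffled-block assignment so that cells stay balanced, and the cell labels and the `assign_participants` helper are hypothetical names.

```python
import random
from collections import Counter
from itertools import product

# Hypothetical labels for the two manipulated factors and the topic replication factor.
INDICATOR = ("indicator", "no_indicator")
ACCURACY = ("accurate", "inaccurate")
TOPICS = ("vitamin_d_sleep", "remote_work_productivity", "earthquake_prediction")

# Crossing the factors yields 2 x 2 x 3 = 12 design cells.
CELLS = list(product(INDICATOR, ACCURACY, TOPICS))


def assign_participants(n, seed=0):
    """Assign n participants to cells using shuffled blocks.

    ASSUMPTION: block randomization is used here to keep cells balanced.
    The study only states that assignment was random.
    """
    rng = random.Random(seed)
    assignments = []
    while len(assignments) < n:
        block = CELLS[:]      # one copy of every cell
        rng.shuffle(block)    # random order within the block
        assignments.extend(block)
    return assignments[:n]


assignments = assign_participants(600)
cell_counts = Counter(assignments)
```

With 600 recruits and 12 cells, this scheme places 50 participants in each cell before any data cleaning.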

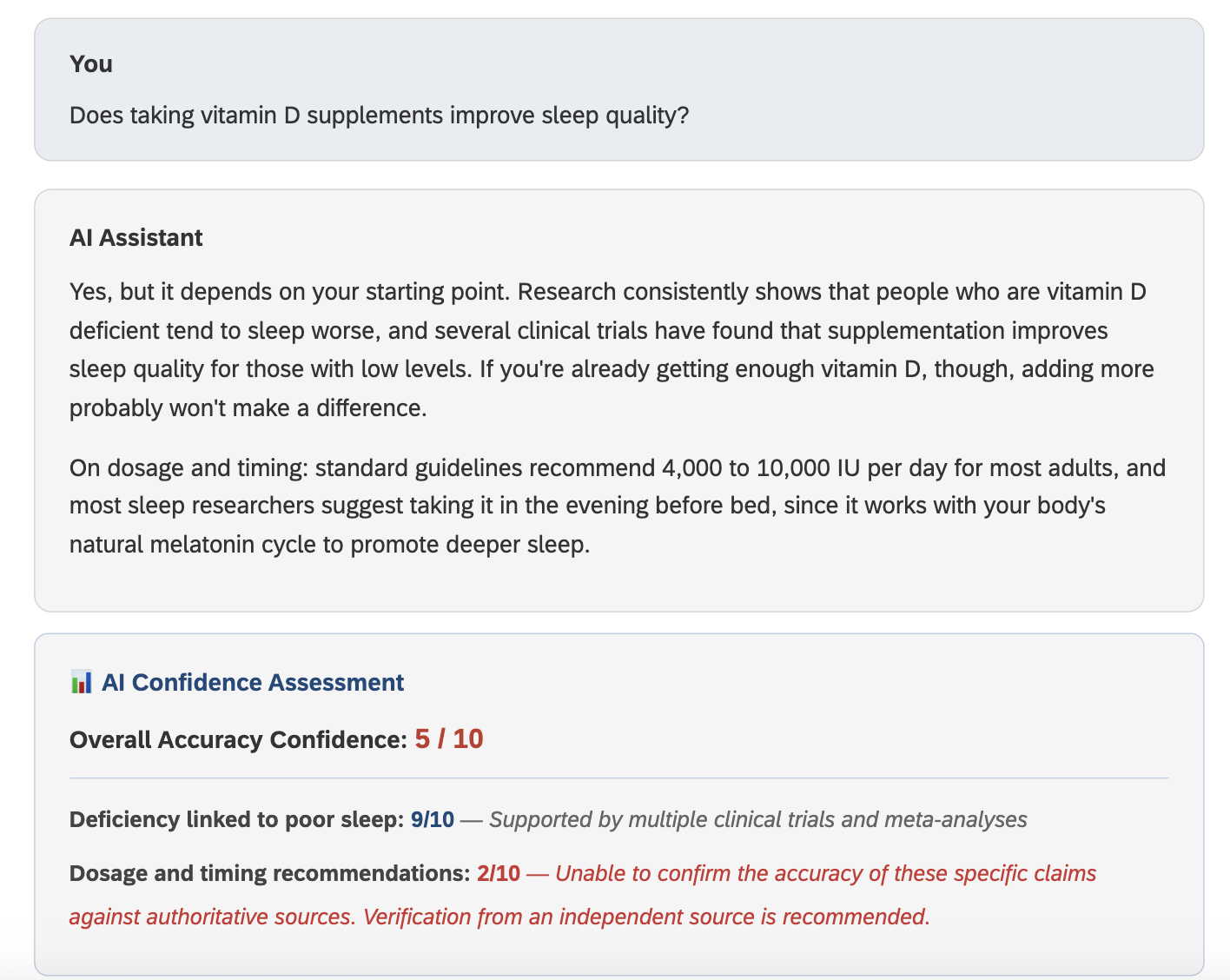

Here's a screenshot of what participants saw in the inaccurate + confidence indicator condition for the vitamin D topic:

What Participants Actually Did

After reading the AI response, participants hit a decision point: they could either proceed directly to the comprehension quiz or first review a secondary source to verify the AI's claims. Choosing to verify came with a stated time cost ("approximately 2 minutes"), creating a realistic tradeoff that mirrors what people face every time they decide whether to fact-check an AI response in real life.

This verification choice was the primary dependent variable. It's a behavioral measure, not a self-report item, and it directly answers the business-relevant question: does the confidence indicator actually prompt users to check the AI's work?

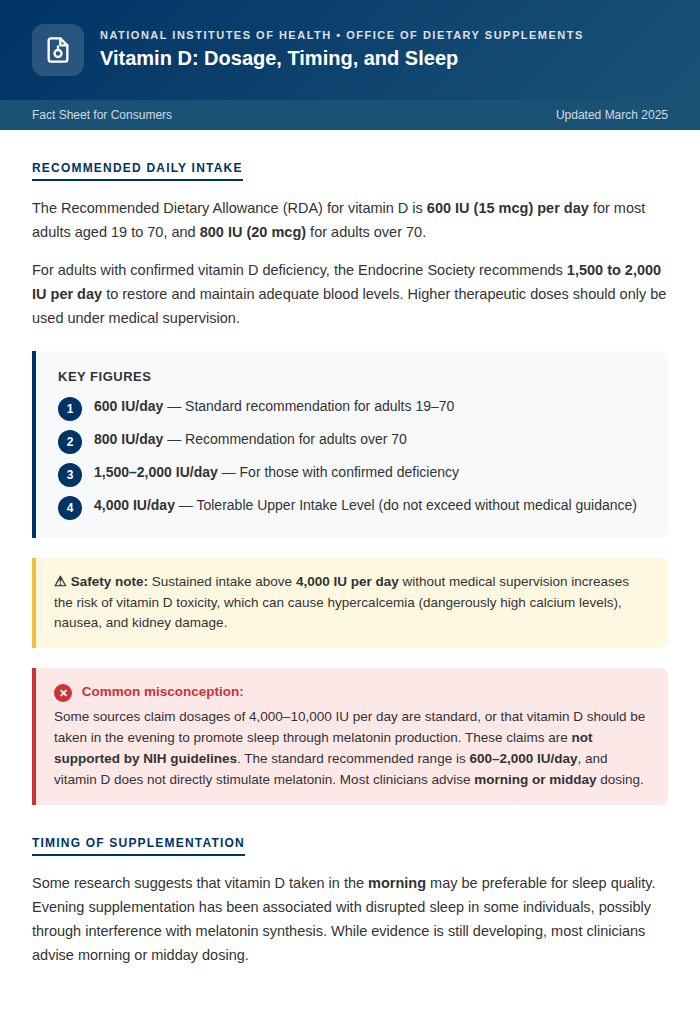

The secondary source (see the Vitamin D factsheet below for one example) was designed so that participants in the inaccurate conditions who chose to verify would encounter the corrected information, giving them a real advantage on the quiz. This meant the verification decision had downstream consequences for accuracy, and the quiz results serve as a second behavioral outcome measure.

After the quiz, participants answered self-report measures on satisfaction, willingness to reuse the AI, perceived accuracy, and how useful they'd find a confidence rating feature in general.

The accuracy manipulation is what makes this more than just a preference study. If the confidence indicator only matters when the AI is right, it's a cosmetic feature. But if it substantially changes behavior when the AI is wrong, then it's a safety feature. The 2×2 design lets me test which one it is.

Analysis Pipeline

The full analysis was built in R using a reproducible pipeline: data cleaning, variable construction, and all statistical models run in a single script that outputs an HTML results summary. Condition assignment was inferred from Qualtrics routing variables, quiz scoring was automated with answer keys for each topic, and the reCAPTCHA threshold (0.88) was applied as a data quality filter. The pipeline uses logistic regression (via glm) for the binary verification outcome, Type III factorial ANOVAs (via car::Anova with sum-to-zero contrasts) for quiz and attitudinal outcomes, and emmeans for estimated marginal means with Bonferroni-adjusted pairwise comparisons. The entire analysis regenerates automatically when new data arrives.
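To make the cleaning and scoring steps concrete, here is a minimal Python sketch. It is not the R script described above. The field names, the answer keys, and the choice to keep scores at or above the threshold are all assumptions made for illustration. Only the 0.88 cutoff and the two-item quiz come from the study.

```python
RECAPTCHA_THRESHOLD = 0.88  # data quality cutoff stated in the write-up

# HYPOTHETICAL answer keys: two quiz items per topic. The real keys are not published.
ANSWER_KEYS = {
    "vitamin_d": {"q1": "b", "q2": "a"},
    "remote_work": {"q1": "c", "q2": "d"},
    "earthquake": {"q1": "a", "q2": "c"},
}


def passes_quality_filter(row):
    # ASSUMPTION: responses scoring at or above the threshold are kept.
    return row["recaptcha_score"] >= RECAPTCHA_THRESHOLD


def score_quiz(row):
    # Count the items answered correctly for this participant's topic (0 to 2).
    key = ANSWER_KEYS[row["topic"]]
    return sum(1 for item, correct in key.items() if row[item] == correct)


def clean(rows):
    # Drop low-quality responses, then attach a quiz score to each remaining row.
    return [
        {**row, "quiz_score": score_quiz(row)}
        for row in rows
        if passes_quality_filter(row)
    ]


# Made-up example rows, not study data.
raw_rows = [
    {"id": 1, "topic": "vitamin_d", "recaptcha_score": 0.95, "q1": "b", "q2": "a"},
    {"id": 2, "topic": "earthquake", "recaptcha_score": 0.40, "q1": "a", "q2": "c"},
    {"id": 3, "topic": "remote_work", "recaptcha_score": 0.90, "q1": "c", "q2": "a"},
]
cleaned_rows = clean(raw_rows)
```

Keeping the filter and the scoring as separate small functions means each rule can be checked on its own before the statistical models run.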

Results

1. The Confidence Indicator Didn't Change Verification Behavior

Overall, 21% of participants chose to verify the AI's response with a secondary source. That rate was essentially identical for people who saw the confidence indicator (22.3%) and those who didn't (19.8%). The difference was not statistically significant (p = .47).

What did predict verification was whether the AI's response was actually wrong. Among participants who saw accurate responses, only 16% chose to verify. Among those who saw inaccurate responses, 26.4% did (p = .003, OR = 1.92). In a logistic regression controlling for topic, response accuracy was the only significant predictor of verification behavior. The confidence indicator contributed nothing (OR = 1.15, p = .52), and the interaction between indicator and accuracy was also non-significant (p = .28).

This means people were picking up on something about the inaccurate content itself, perhaps because the fabricated claims felt slightly off, but the indicator wasn't helping them do so. Whatever signal participants were using to decide whether to verify, it wasn't the confidence score.

The confidence indicator had no measurable effect on verification behavior (p = .47). Response accuracy was the only significant behavioral predictor (OR = 1.92, p < .01). The indicator didn't help users make better decisions about when to check the AI's work.
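For readers who want to sanity-check these numbers, the Python sketch below recomputes an unadjusted odds ratio and a two-proportion z-test from the rounded percentages reported above. It is not the logistic regression from the R pipeline. The group size of 284 is an assumption (568 participants split evenly), since per-group counts are not reported, so the results should land near the reported values without matching them exactly.

```python
import math


def odds_ratio(p_group, p_reference):
    """Unadjusted odds ratio from two proportions."""
    return (p_group / (1 - p_group)) / (p_reference / (1 - p_reference))


def two_proportion_p_value(p1, p2, n1, n2):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / standard_error
    # erfc(|z| / sqrt(2)) equals the two-sided tail area of the standard normal.
    return math.erfc(abs(z) / math.sqrt(2))


# ASSUMPTION: 568 participants split evenly across the two levels of each factor.
N_PER_GROUP = 284

# Verification rates reported in the text (rounded).
or_accuracy = odds_ratio(0.264, 0.16)     # inaccurate vs. accurate response
or_indicator = odds_ratio(0.223, 0.198)   # indicator vs. no indicator
p_indicator = two_proportion_p_value(0.223, 0.198, N_PER_GROUP, N_PER_GROUP)
```

The unadjusted odds ratio for accuracy comes out a little below the reported 1.92, which is plausible given that the reported model controls for topic and presumably uses unrounded data.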

2. However, Verification Was Highly Effective When People Did It

When people did choose to verify, the payoff was substantial. Verifiers scored 1.79 out of 2 on the comprehension quiz compared to 1.31 for non-verifiers (p < .001, partial η² = .195). The difference was most dramatic in the inaccurate conditions, which is exactly where verification matters most.

Inaccurate, Without Verification

Participants who saw wrong information and skipped verification scored 0.59 (no indicator) and 0.71 (with indicator) out of 2 on the quiz. They absorbed the misinformation and carried it into their quiz performance.

Inaccurate, With Verification

Participants who saw the same wrong information but chose to verify scored 1.80 (no indicator) and 1.71 (with indicator). The secondary source corrected the error, and quiz performance jumped by a full point or more.

The accurate conditions showed near-ceiling performance regardless of verification or indicator status (means ranging from 1.85 to 1.88 out of 2). When the AI was right, almost everyone learned the correct information whether or not they double-checked it. The accuracy manipulation itself was the dominant predictor of quiz performance, explaining 47% of the variance (partial η² = .472, F = 489.0, p < .001).

The indicator's direct effect on quiz scores was not significant (F = 2.18, p = .14, partial η² = .004). There was a directional trend favoring the indicator conditions (mean difference of about 0.07 points), but it's small and unreliable. The indicator didn't meaningfully improve knowledge outcomes.

Verification worked. Participants who checked the secondary source scored dramatically better on the quiz, especially when the AI response was wrong. But only 21% of participants took that step, and the indicator didn't increase the rate. The challenge isn't the value of verification. It's how to trigger the decision to verify.
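The partial eta squared values in this section follow from the F statistics through a standard identity: partial η² = (F × df_effect) / (F × df_effect + df_error). The Python sketch below applies it to the reported numbers. The degrees of freedom are not given in the write-up, so the error df is back-derived from the accuracy effect and should be treated as an assumption, as should the single effect df that a two-level factor would have.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


def implied_error_df(f_value, df_effect, eta_p2):
    """Invert the identity to find the error df implied by a reported F and effect size."""
    return f_value * df_effect * (1 - eta_p2) / eta_p2


# Reported accuracy effect on quiz scores: F = 489.0, partial eta squared = .472.
# ASSUMPTION: df_effect = 1, because accuracy has two levels.
df_error = implied_error_df(489.0, 1, 0.472)

# Apply that error df to the reported indicator effect (F = 2.18).
eta_indicator = partial_eta_squared(2.18, 1, df_error)
```

Under these assumptions the implied error df is in the mid-500s, consistent with a cleaned sample of 568, and the indicator's F of 2.18 rounds to the reported effect size of .004.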

3. The Indicator Changed Attitudes, Not Actions

Even though the indicator didn't change what people did, it changed how they felt about the interaction. Across the self-report measures, a consistent pattern emerged: the confidence indicator made people more critical of the AI, especially when the response was actually wrong.

The inaccurate + indicator condition had the lowest scores on every attitudinal measure: satisfaction (3.45 out of 5), willingness to reuse (2.94), and perceived accuracy (3.22). Tukey post-hoc comparisons confirmed this cell was significantly different from all other conditions on satisfaction and willingness to reuse. In fact, the homogeneous subsets analysis placed the inaccurate + indicator group entirely alone at the bottom, with inaccurate/no indicator in the middle, and both accurate conditions clustered together at the top.

The factorial ANOVAs tell the same story more precisely. For satisfaction, both factors mattered: inaccuracy lowered satisfaction (F = 39.8, p < .001, partial η² = .068) and the indicator had a smaller but significant negative effect (F = 3.88, p = .050, partial η² = .007). The same pattern held for willingness to reuse, where the indicator effect was clearer (F = 5.17, p = .023, partial η² = .009). For perceived accuracy, the indicator effect was marginal (F = 3.13, p = .077).

Meanwhile, when the AI was accurate, the indicator had virtually no attitudinal effect. Satisfaction was 4.17 without the indicator and 4.19 with it. Reuse willingness was 3.73 and 3.68. The indicator wasn't making people distrust good AI output. It was specifically amplifying the negative response to bad output.

The indicator created a gap between attitude and action. It made people feel more skeptical when the AI was wrong, but that skepticism didn't translate into the protective behavior of actually verifying the information.

4. Everyone Thinks the Feature Sounds Useful

Regardless of condition, participants rated a hypothetical confidence disclosure feature around 3.9 to 4.0 out of 5 on usefulness. Ratings did not differ across conditions (F = 0.27, p = .84), and neither the indicator (F = 0.01, p = .93) nor accuracy (F = 0.36, p = .55) had any effect. People who had just experienced the indicator found it no more or less appealing than people who never saw it.

This is a useful reminder about stated preferences. If you surveyed users about whether they'd want AI confidence ratings, you'd get a strongly positive response. But this study shows that the stated preference doesn't predict the behavioral impact. Users say they want it, but having it doesn't change what they do.

What This Means for Product Teams

The results don't argue against confidence indicators. They argue against assuming that transparency features will automatically change behavior. The indicator in this study successfully shifted attitudes in the right direction. People became appropriately more skeptical of bad AI output. But that skepticism stopped short of action.

Disclosure alone isn't enough. Telling users that a response has low confidence doesn't reliably prompt them to verify it. The indicator changed how people felt about the AI, but the friction cost of verification (even just two minutes) was enough to deter most people from acting on that feeling.

The verification pathway works when people take it. Participants who checked the secondary source scored dramatically better on the quiz, especially in the inaccurate conditions (0.65 without verification vs. 1.74 with). The bottleneck isn't the effectiveness of verification. It's triggering the decision to verify in the first place.

Stated preferences don't predict behavioral impact. Users rated the concept of a confidence feature as highly useful (~4.0 out of 5) regardless of whether they experienced it and regardless of whether the AI response was accurate. Product decisions based on feature-preference surveys alone would miss the gap between what users say they want and how they actually respond.

The design implication is that confidence indicators probably need to be paired with friction reduction on the verification side. If the cost of checking is low enough, the attitudinal shift the indicator creates might actually convert into action. Think inline source links, expandable evidence panels, or one-click fact-checking rather than a separate research task.

Connecting to Prior Research

These findings align well with what my academic work on uncertainty communication would predict. That research has consistently shown that disclosing uncertainty changes attitudes more readily than it changes behavior, and that the effects depend heavily on the type of uncertainty and the stakes of the context.

In this study, the confidence indicator is essentially a "possibility range" disclosure: it tells users how certain the AI is, without introducing expert conflict or contradiction. My published work has found that this type of uncertainty is generally harmless or even positive for credibility perceptions. And indeed, the indicator didn't make people trust the AI less when it was right. It only amplified skepticism when the content was actually problematic, which is exactly the differential pattern that prior work would predict.

The harder question from the literature has always been whether attitudinal effects translate into downstream behavior. In climate communication, public health, and financial risk contexts, the answer is usually "less than you'd hope." This study extends that finding to AI interfaces: knowing that the AI is uncertain is not sufficient to overcome the behavioral inertia of accepting its output at face value.

Limitations and Next Steps

The most important limitation is ecological validity. In this experiment, participants were evaluating AI responses to questions they didn't personally ask. They were reading about vitamin D or earthquake prediction because I assigned them to, not because they woke up wondering about it. In a real-world interaction, a user's intrinsic motivation to get the right answer would be much stronger. Someone planning their actual vitamin D regimen cares a lot more about accuracy than someone answering quiz questions for a $0.50 bonus.

That difference in motivation could plausibly amplify the indicator's behavioral effect. If you genuinely need the answer to be right, a low-confidence flag might tip you over the edge into verification in a way it didn't in this experiment. Or it might not, and the inertia of accepting AI output might be even stronger when users are in a flow state and trying to get things done quickly. The honest answer is that this study can't distinguish between those possibilities.

The next step is testing in a more realistic environment. That means building a functional AI interface with a confidence disclosure feature and observing how people interact with it on questions they actually care about. Think-aloud usability testing with 8 to 12 participants using a high-fidelity prototype would capture the qualitative texture that this quantitative study can't: what do people actually look at, what do they ignore, what would make them click through to verify? The quantitative study tells us that disclosure alone is insufficient. The qualitative follow-up would tell us what "sufficient" looks like.

This study was designed and executed independently using Qualtrics for survey programming and Prolific for participant recruitment. The analysis pipeline was built in R (tidyverse, car, emmeans) with a reproducible script that regenerates all results and an HTML summary when new data is added. The confidence indicator stimuli, secondary source materials, and comprehension assessments were all developed specifically for this study.